Design the Road for the Car

AI's brittleness is an environment problem, not a model problem.

For most of my life, self-driving cars have felt five years away. They still mostly do. The models have always been more capable than people assumed. The real problem has been the road.

Cars had the same problem a hundred years earlier. They existed in the early 1900s but did not take off until the roads caught up. Paved roads spread through the 1920s. The Interstate Highway System came in the 1950s. The car did not get dramatically better in those decades. The road did.

I worked on self-driving as an engineering student: the DARPA Grand Challenge at Berkeley, the Mars Rover at the Jet Propulsion Laboratory, and self-driving cars at Carnegie Mellon. The cars were technically capable of more than the streets allowed. The bottleneck was the world around them — pedestrians moving unpredictably, lane markings fading, construction changing the map overnight, intersections built for human judgment rather than machine reliability.

Immature technology performs surprisingly well inside a constrained environment. If we had redesigned roads for autonomous vehicles, with standardized markings, embedded sensors, and controlled intersections, we probably would have had reliable self-driving earlier with simpler systems.

I keep coming back to that lesson when I build AI products. The road, not the car, decides what AI can do.

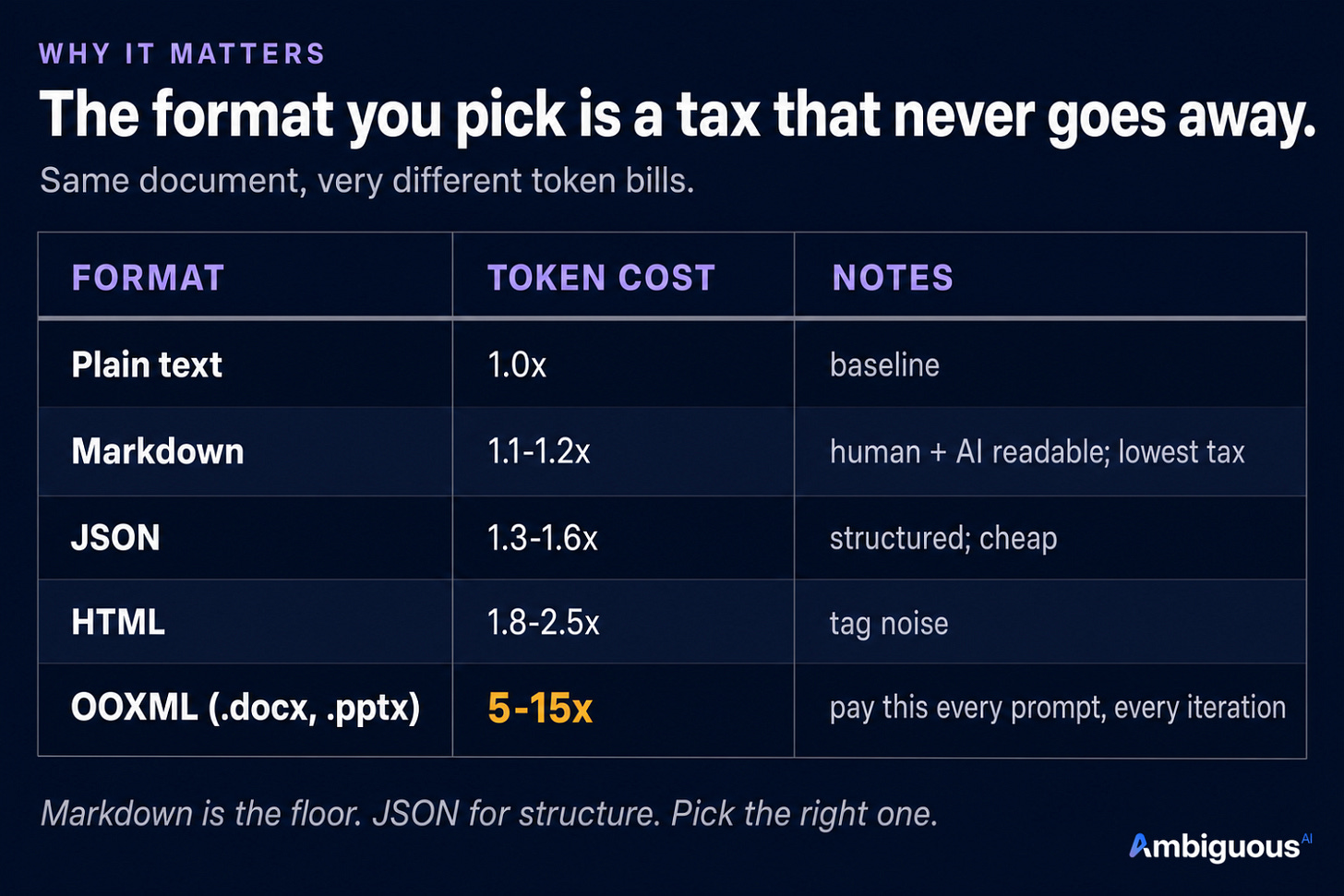

The token tax

Documents are the first place this shows up. The same office document can cost up to fifteen times as many tokens in OOXML as in plain text, and the model pays that bill on every prompt.

Plain text is the baseline: 10K tokens per document. Markdown runs about 1.1 to 1.2 times that. JSON runs 1.3 to 1.6. HTML jumps to 1.8 to 2.5. OOXML, which powers Microsoft Office, runs 5 to 15 times the baseline: 50K to 150K tokens for the same content.

A document that costs 12K tokens in Markdown can cost 150K in OOXML. At that scale, the choice is not about a price point. It decides whether the model holds the entire project in context or sees one file at a time.
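To make the arithmetic concrete, here is a minimal sketch that turns the multipliers above into token ranges and counts how many whole documents fit in a context window. The 200K-token window is an assumption chosen for illustration, not a figure from any particular model, and the function names are hypothetical.

```python
# Multipliers relative to plain text, taken from the ranges quoted above.
FORMAT_MULTIPLIERS = {
    "plain text": (1.0, 1.0),
    "Markdown": (1.1, 1.2),
    "JSON": (1.3, 1.6),
    "HTML": (1.8, 2.5),
    "OOXML": (5.0, 15.0),
}

BASELINE_TOKENS = 10_000   # plain-text cost per document, from the text
CONTEXT_WINDOW = 200_000   # ASSUMPTION: hypothetical context budget


def token_range(fmt, baseline=BASELINE_TOKENS):
    """Return the (low, high) token cost of one document in the given format."""
    low, high = FORMAT_MULTIPLIERS[fmt]
    return round(baseline * low), round(baseline * high)


def documents_that_fit(fmt, window=CONTEXT_WINDOW):
    """Return (worst case, best case) count of whole documents that fit."""
    low_cost, high_cost = token_range(fmt)
    return window // high_cost, window // low_cost


if __name__ == "__main__":
    for fmt in FORMAT_MULTIPLIERS:
        low, high = token_range(fmt)
        worst, best = documents_that_fit(fmt)
        print(f"{fmt:<10} {low:>7,} to {high:>7,} tokens, {worst} to {best} documents per window")
```

Under that assumed window, Markdown leaves room for 16 to 18 documents, while OOXML leaves room for 1 to 4. The exact numbers move with the window size; the ratio does not.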

The format you pick is a tax that never goes away. It gets paid on every prompt, paid again on every iteration, and paid a third time when coherence breaks under context pressure.

The intermediate-format trap

The second place this shows up is accuracy. Today’s state-of-the-art models do not edit office documents directly. They write JavaScript representing the document, then convert it to .pptx.

The pipeline is: prompt → JavaScript → .pptx. Each conversion is a point where errors can compound. A heading meant to be level two becomes level three. A footer drifts a few pixels. Most of the time the output looks fine.

Editing an existing .pptx is worse. The model has to round-trip the file from .pptx back into a JavaScript representation it can reason about. That conversion is lossy by design. Whatever the original author put in comes back as an approximation, and that approximation carries into the next iteration.
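The round-trip loss is easy to reproduce in a toy model. The sketch below is not how any real converter works: `Shape` and `SimpleShape` are hypothetical stand-ins for a rich slide element and its intermediate form, written in Python rather than JavaScript for brevity. The only real-world constant is 9,525 EMUs per pixel at 96 DPI, the fine-grained unit Office formats use for positions.

```python
from dataclasses import dataclass
from typing import Optional

EMU_PER_PX = 9525  # 914,400 EMUs per inch divided by 96 pixels per inch


@dataclass(frozen=True)
class Shape:
    """Hypothetical rich element, standing in for what the source file stores."""
    text: str
    heading_level: int
    x_emu: int                       # position in fine-grained units
    animation: Optional[str] = None  # a detail the intermediate form ignores


@dataclass(frozen=True)
class SimpleShape:
    """Hypothetical intermediate form: only what the generator script models."""
    text: str
    heading_level: int
    x_px: int                        # coarser unit, so positions get truncated


def to_intermediate(shape: Shape) -> SimpleShape:
    # The animation has no slot in the intermediate form, so it is dropped here.
    return SimpleShape(shape.text, shape.heading_level, shape.x_emu // EMU_PER_PX)


def from_intermediate(simple: SimpleShape) -> Shape:
    # Anything the intermediate form did not carry comes back as a default.
    return Shape(simple.text, simple.heading_level, simple.x_px * EMU_PER_PX)


def round_trip(shape: Shape) -> Shape:
    return from_intermediate(to_intermediate(shape))


original = Shape("Quarterly plan", heading_level=2, x_emu=1_000_000, animation="fade")
after_one_edit = round_trip(original)
print(original)
print(after_one_edit)
```

The text survives, but the animation is gone and the position has shifted, and nothing in the output signals that either happened.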

I have seen this before. Back at the Grand Challenge, we were retrofitting a motorcycle. The teams that finished had lidar and a chassis built from the ground up. Retrofit only gets you so far.

This pipeline is a great local maximum. Most production AI products run this stack, and most of the time it is good enough. But it cannot reach the next level without a new approach.

The way out of a local maximum is not a stronger model. It is a different system.

The unreasonable choice

Both of these — the token tax on every prompt, and the accuracy loss on every edit — are environment problems, not model problems. A stronger model still has to read OOXML. A stronger model still has to round-trip through a JavaScript representation that loses a little on every pass.

George Bernard Shaw said it cleanest:

The reasonable man adapts himself to the world; the unreasonable one persists in trying to adapt the world to himself. Therefore all progress depends on the unreasonable man.

The reasonable path takes the formats as given and asks the model to work harder inside them. The unreasonable path redesigns the formats themselves. Roads for cars cost a lot. We built them anyway. The car could not get any better until we did.

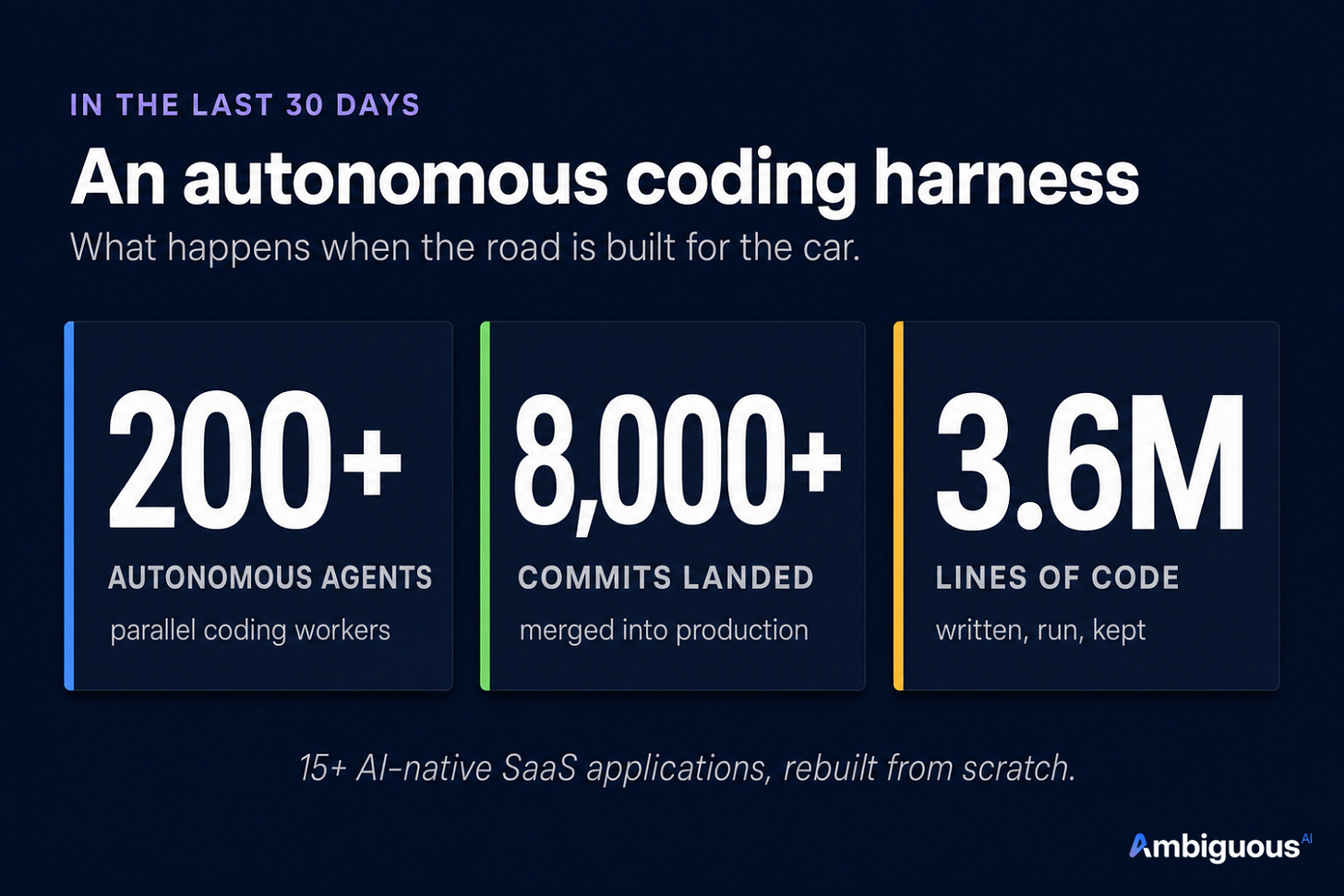

We took the unreasonable path. In the last 30 days, we ran 200+ autonomous coding agents, landed 8,000+ commits, and wrote 3.6M lines of code. We rebuilt 15+ AI-native SaaS applications from scratch, each of them human-AI interoperable (more to come soon).

Every surface is a peer. CLI, API, MCP, and human UI all hit the same source of truth, so the agent and the human work on the same object with no translation layer between them.
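One way to picture peer surfaces is a single store with thin adapters in front of it. This is a sketch under assumptions, not our actual architecture: `Workspace`, `cli_surface`, and `api_surface` are hypothetical names, and a real system would add authentication, validation, and persistence.

```python
class Workspace:
    """Hypothetical single source of truth: one in-memory map of documents."""

    def __init__(self):
        self._docs = {}

    def write(self, doc_id, body):
        self._docs[doc_id] = body

    def read(self, doc_id):
        return self._docs[doc_id]


def cli_surface(ws, argv):
    """Adapter for a command line, e.g. ["write", "plan", "Ship", "Friday"]."""
    command, doc_id, *rest = argv
    if command == "write":
        ws.write(doc_id, " ".join(rest))
        return "ok"
    return ws.read(doc_id)


def api_surface(ws, request):
    """Adapter for a JSON-style request; calls the same two methods as the CLI."""
    if request["method"] == "write":
        ws.write(request["doc_id"], request["body"])
        return {"status": "ok"}
    return {"status": "ok", "body": ws.read(request["doc_id"])}


ws = Workspace()
# An agent writes through the API surface...
api_surface(ws, {"method": "write", "doc_id": "plan", "body": "Ship on Friday"})
# ...and a human reads the same object through the CLI surface.
print(cli_surface(ws, ["read", "plan"]))
```

Neither adapter holds its own copy of the document, so there is nothing to convert or reconcile between them.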

Internal representations are chosen for token efficiency and AI accuracy first, legacy compatibility second. The format you pick is a tax that never goes away, so pick the right one.

Every action by a human or an agent has revision history and a rollback. When something goes wrong, the action is reversed and the cause identified.
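A minimal sketch of that idea, assuming an append-only history where a rollback is recorded as one more revision. The class and the actor labels are hypothetical and stand in for whatever a real system would store.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Revision:
    actor: str  # hypothetical labels such as "human:ana" or "agent:42"
    body: str


class VersionedDocument:
    """Append-only history: every edit and every rollback adds a revision."""

    def __init__(self, body=""):
        self._history = [Revision("system", body)]

    @property
    def body(self):
        return self._history[-1].body

    def edit(self, actor, body):
        """Record a new revision and return its index in the history."""
        self._history.append(Revision(actor, body))
        return len(self._history) - 1

    def rollback(self, actor, to_revision):
        # The rollback is itself a revision, so the bad edit stays on record.
        self._history.append(Revision(actor, self._history[to_revision].body))

    def author_of(self, revision):
        """Identify who made a given revision, human or agent."""
        return self._history[revision].actor


doc = VersionedDocument("v0")
good = doc.edit("human:ana", "v1")
bad = doc.edit("agent:42", "v2 with a broken table")
doc.rollback("human:ana", good)
print(doc.body, "| bad edit came from", doc.author_of(bad))
```

Because nothing is overwritten, reversing the action and identifying its cause are two lookups against the same history.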

That is what a productivity stack built for AI looks like: the documents the model reads, the formats it edits, the interfaces it works through. Control the environment, not the model.

What this unlocked

Our first product took eight months. Our current product reached the same level of complexity, with a comparable code base, in just seven days. The model did not get 30× better between the two. The road did.

This is what happens when the workspace itself is the road. The model operates at full strength, not fighting translation layers.

The quieter question

We spend a lot of time asking whether the model is good enough. The quieter question is whether the environment is.

Iteration drift, hallucinated citations, lossy edits, exploding context costs: these read as model limitations. Often they are environment limitations. A stronger model still spends energy fighting bad roads. A cleaner environment lets an ordinary model look unusually capable.

Design the road for the car.